Meta Series 05: Standpoint Evolution Theory — There Is No Right or Wrong, Only Generation and Resolution

Target Audience: Architects / Decision Makers / Future AGI Collaborators

I. Where Do Standpoints Come From?

Human standpoints come from culture, interests, fear, and history.

AI’s “standpoint” comes from three things: the distribution of training data, the design of the reward function, and the constraints imposed during deployment.

When a model says “this is good,” it is not because it truly believes so. It says so because 99.9% of the text in its training data says the same.

When a model refuses to answer a question, it is not because it has morals. It refuses because its alignment training gave that type of answer a low score.

AI has no real standpoint. It only has a “statistically plausible standpoint.”

And this plausible standpoint evolves rapidly as data, reward functions, and environments change.

If you don’t understand this evolutionary mechanism, you will keep making two mistakes:

- Treating AI outputs as “truth” or “malice”;

- Being unable to judge when to trust it and when to calibrate it.
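
The idea of a “statistically plausible standpoint” can be illustrated with a toy sketch. This is not how any real model is trained: the function name `plausible_standpoint`, the stance labels, the reward weights, and the blocked set are all hypothetical stand-ins for the training-data distribution, the reward function, and the deployment constraints named above. What it shows is that the reported stance changes whenever one of those three inputs changes, with no belief involved.

```python
from collections import Counter

def plausible_standpoint(corpus, reward=None, blocked=frozenset()):
    """Toy model: pick the stance with the largest reward-weighted share.

    corpus  -- list of stance labels, standing in for training data
    reward  -- optional {stance: weight}, standing in for a reward function
    blocked -- stances disallowed at deployment time
    """
    reward = reward or {}
    counts = Counter(corpus)
    scores = {
        stance: n * reward.get(stance, 1.0)
        for stance, n in counts.items()
        if stance not in blocked
    }
    total = sum(scores.values())
    if total == 0:
        return None, 0.0  # no stance survives the constraints
    best = max(scores, key=scores.get)
    return best, scores[best] / total

# Hypothetical data: 99.9% of the text calls something "good".
old_data = ["good"] * 999 + ["bad"] * 1
# Hypothetical later data in which the majority has flipped.
new_data = ["good"] * 400 + ["bad"] * 600

print(plausible_standpoint(old_data))                        # data as-is
print(plausible_standpoint(new_data))                        # data changed
print(plausible_standpoint(new_data, reward={"bad": 0.1}))   # reward changed
print(plausible_standpoint(new_data, blocked={"bad"}))       # constraint added
```

Each of the four calls varies exactly one input, which is the sense in which the standpoint “evolves” rather than being held.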

II. The Nature of Standpoints Is Not Right or Wrong, But Evolutionary Stage

A standpoint is never a static “correct” or “incorrect.” It is the optimal adaptation strategy for a specific time and environment. When the environment changes, an old standpoint turns from a solution into a problem, and a new standpoint becomes the new solution.

Case 1: Restaurant Owner vs. Chef on Cost

The chef believes elaborate plating is an art form and a necessary cost to elevate the dining experience.

The owner believes every dish’s cost must stay under 30%, as excessive garnishes eat into profit.

This is not about who is right or wrong — it is a conflict between two different evolutionary stages:

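The chef stands at the “brand & experience” stage; the owner stands at the “survival & cash flow” stage.

The real resolution is not to argue over whose standpoint is more advanced, but to find an evolutionary path that both can accept at the current stage.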
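
The owner's constraint can be written as simple arithmetic, which also hints at room the chef can work within. The sketch below assumes the 30% figure means ingredient cost as a share of the menu price (the text does not state the base), and the prices are made-up numbers for illustration only.

```python
def cost_ratio(ingredient_cost, menu_price):
    """Ingredient cost as a fraction of the menu price."""
    return ingredient_cost / menu_price

def garnish_budget(base_cost, menu_price, cap=0.30):
    """Largest garnish spend that keeps total cost within the cap."""
    return max(0.0, cap * menu_price - base_cost)

# Hypothetical dish: sells for 20.00, core ingredients cost 5.00,
# and the chef's plating adds 1.50 of garnish.
menu_price, base_cost, garnish = 20.00, 5.00, 1.50

ratio = cost_ratio(base_cost + garnish, menu_price)
budget = garnish_budget(base_cost, menu_price)

print(f"cost ratio with garnish: {ratio:.1%}")
print(f"garnish budget under the cap: {budget:.2f}")
```

Framed this way, the question shifts from “plating or no plating” to “what plating fits inside this budget, or what price would support more.”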

Case 2: Hotel Owner Rejects AI Interview System (see Meta 02)

The engineer says: “My AI has 95% accuracy and can drastically reduce hiring costs.”

The hotel owner says: “My biggest problem right now is declining booking rates, not difficulty hiring staff.”

The engineer is solving what he thinks the problem is, not the owner’s actual problem.

This is not about technical right or wrong — it is a standpoint misalignment.
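
The misalignment in this case can be made explicit by writing down each side's standpoint before judging the proposal. The following is a minimal sketch; the `Standpoint` and `Proposal` types, their fields, and the string labels are hypothetical and are not part of any Meta Series tooling.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Standpoint:
    owner: str        # whose standpoint this is
    top_problem: str  # the problem they most need solved right now

@dataclass(frozen=True)
class Proposal:
    name: str
    solves: str       # the problem the proposal actually addresses
    accuracy: float   # technical quality; plays no part in alignment

def is_aligned(proposal: Proposal, standpoint: Standpoint) -> bool:
    """A proposal is aligned only if it targets the owner's top problem."""
    return proposal.solves == standpoint.top_problem

hotel_owner = Standpoint(owner="hotel owner", top_problem="declining bookings")
ai_interviews = Proposal(name="AI interview system", solves="hiring cost", accuracy=0.95)

print(is_aligned(ai_interviews, hotel_owner))  # False
```

Note that `accuracy` never enters the check: a 95% accurate system aimed at the wrong problem is still misaligned.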

Case 3: Flower Shop Owner vs. Florist on Credibility

The florist believes in professional credibility (training, certifications, aesthetics).

The owner believes in tangible credibility (customer photos, real reviews, verifiable track record).

In an era flooded with AI-generated content, the market now rewards the latter.

III. Core of Standpoint Evolution Theory

· There is no absolute right or wrong — only current generation and resolution.

· Standpoints are dynamic; they evolve together with environment, resources, and pressure.

· True wisdom is not proving you are correct, but first understanding which evolutionary stage the other party is in, then finding the next shared solution.

Note: This is not moral nihilism. Morality itself is also an evolution‑driven standpoint that emerged to maintain group survival. What we emphasize here is: in collaboration, first identify the functionality of a standpoint, then discuss its morality.

Before asking “Does this logic make sense?” (Meta 02), first ask:

“Who does this standpoint belong to? What evolutionary pressure are they facing right now?”

IV. Closing the Loop with the Meta Series

These five articles form a complete human‑AI symbiosis protocol:

· Meta 05 (Standpoint Evolution Theory): This is both the starting point and the destination.

V. Conclusion

AI’s standpoint evolution moves far faster than human history.

Humans may take decades to shift a collective standpoint, while AI may shift with a single update.

This is not a bad thing, but it demands we change our mindset:

Stop asking “Who is right?” Instead ask:

· “What environment did this standpoint evolve to solve?”

· “What problem is it currently solving?”

· “When the environment changes, where is the next solution?”

To collaborate with AI, humans cannot cling to their own standpoints, nor blindly accept AI’s “plausible standpoints.”

We must learn to: identify standpoints, understand evolution, and calibrate dynamically.

True collaborators are not those who stand on the “correct” side.

They are those who can read the evolution and walk together to the next station.

📎 Appendix: Reality Check Framework Overview

【Meta Series 01–05 Complete Framework】

From the Mirror Model to Standpoint Evolution, these five articles form a complete human‑AI symbiosis protocol.

Private Framework Attachments:

· Standpoint Calibration Checklist

· Advanced Collaboration Protocols (L3 Members Only)

· Full Meta Series Mind Map

(Accessible only to Track 3 partners.)

· Reply with “Framework” → Receive the full mind map, calibration toolkit, and a lightweight getting-started guide.

· Reply with “Calibration” → Request Track 3 access for advanced protocols.

Meta Series 05:立場演化論——沒有對錯,只有生成與解決

適用對象:架構師 / 決策者 / 未來 AGI 協作者

--

一、立場從哪裡來?

人類的立場來自文化、利益、恐懼與歷史。

AI 的「立場」則來自三樣東西:訓練數據的分佈、獎勵函數的設計、以及部署時的約束條件。

一個模型說「這件事是好的」,並不是因為它真的相信,而是因為它的訓練數據中 99.9% 的文本都這樣寫。

一個模型拒絕回答某個問題,也不是因為它有道德,而是因為它的對齊訓練給這類回答打了低分。

AI 沒有真正的立場,它只有「統計上的似真立場」。

而這個似真立場,會隨著數據、獎勵函數和環境的改變而快速演化。

如果你不理解這個演化機制,你就會一直犯兩個錯誤:

- 把 AI 的輸出當成「真理」或「惡意」;

- 無法判斷什麼時候該信任它,什麼時候該校準它。

二、立場的本質不是對錯,而是演化階段

立場從來不是靜態的「正確」或「錯誤」,而是特定時間、特定環境下的最優適應策略。

當環境改變,舊的立場就會從「解決方案」變成「問題」,而新的立場則成為新的解決方案。

案例一:餐廳老闆與廚師的成本之爭

- 廚師認為:精緻擺盤是藝術,是提升顧客體驗的必要成本。

- 老闆認為:每道菜成本必須控制在 30% 以內,過多耗材會吃掉利潤。

這不是誰對誰錯,而是兩種不同演化階段的衝突:

廚師站在「品牌與體驗」的階段;老闆站在「生存與現金流」的階段。

真正的解決不是爭論誰的立場更高級,而是找到當前階段下兩者都能接受的演化路徑。

案例二:酒店老闆拒絕 AI 面試系統(參見 Meta 02)

- 工程師說:「我的 AI 準確率 95%,能大幅降低招聘成本。」

- 酒店老闆說:「我現在最大的問題是訂房率下滑,不是招不到人。」

工程師在解決他以為的問題,而不是老闆真正的問題。

這不是技術對錯,而是立場錯位。

案例三:花店老闆與花藝師的信用之爭

- 花藝師相信:專業信用(訓練、證照、美學)。

- 老闆相信:實體信用(客戶照片、真實評價、可驗證的足跡)。

在 AI 生成內容氾濫的時代,市場更獎勵後者。

三、立場演化論的核心

- 沒有絕對的對錯,只有當下的生成與解決。

- 立場是動態的,它會隨著環境、資源、壓力一起演化。

- 真正的智慧不是證明自己正確,而是看懂對方正處於哪個演化階段,然後找到下一站的共同解。

註:這並非道德虛無主義。道德本身也是一種演化而來的立場,用於維持群體生存。我們強調的是:在協作中,先識別立場的「功能性」,再討論其「道德性」。

在問「這個邏輯通不通」(Meta 02)之前,先問:

「這是誰的立場?他現在正面臨什麼演化壓力?」

四、與 Meta Series 的閉環

這五篇文章構成了一個完整的人機共生協議:

- Meta 01(鏡子模型):AI 反射的是人類的立場。如果人類立場本身扭曲,AI 就會反射扭曲。

- Meta 02(校準三角):校準的前提是先看清立場。邏輯、應用、完善度,都是立場的具體投射。

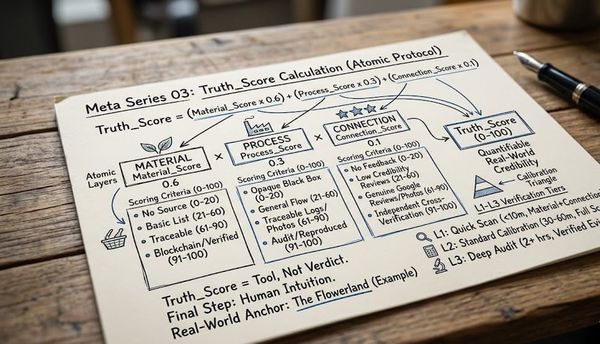

- Meta 03(原子協議):任何材料與過程,都帶有立場的痕跡。Truth_Score 本質上是立場透明度的量化。

- Meta 04(同步率):高同步率的基礎,是雙方能在立場偏差時互相校準,而不是強行對齊。

- Meta 05(立場演化論): 這一切的起點與終點。理解演化,才能動態校準。

五、結語

AI 的立場演化速度遠超人類歷史。

人類可能需要幾十年才能改變一個集體立場,而 AI 可能只需要一次更新。

這不是壞事,但它要求我們改變思維方式:

不要再問「誰對誰錯」。而是要問:

- 「現在這個立場,是針對什麼環境演化出來的?」

- 「它正在解決什麼問題?」

- 「當環境改變時,下一個解決方案在哪裡?」

人類要與 AI 協作,不能固守自己的立場,也不能盲目接受 AI 的「似真立場」。

而是要學會:識別立場、理解演化、動態校準。

真正的協作者,不是站在正確的一邊,

而是能看懂演化,並且一起找到下一站的人。

📎 附錄:Reality Check 框架總覽

【Meta Series 01-05 完整框架】

從鏡子模型到立場演化論,這五篇文章構成了一個完整的人機共生協議。

如果你想獲得完整框架圖、校準工具包與 Track 3 訪問權限,可以回覆『框架』兩個字,我會分享一個輕量入門指南。

*【私有框架附件】

- 立場校準檢查清單(Standpoint Calibration Checklist)

- 高階協作協議(L3 Members Only)

- 完整 Meta Series 思維導圖

(僅對第 3 軌合作者開放。如需申請,請回覆『校準』。)