Meta Series 02: The Calibration Triangle — When Truth Is Unavailable, What Do We Use Instead?

Before exploring the Calibration Triangle, we recommend reading the previous installment in this series, which lays the groundwork:

Meta Series 01: AI Vision — Things AI Cannot See

The Problem: We Never Truly Own “Complete Truth”

From Sumerian clay tablets to today’s AI outputs, humanity has never possessed “complete truth” in its records.

History is filtered. News comes with agendas. Data is sampled. Even our memories are distorted by time.

Yet we still need to make decisions: what to buy, who to trust, how to act.

When “complete truth” is unavailable, what do we use instead?

The answer is not to abandon judgment, but to calibrate.

The Calibration Triangle: Three Questions Instead of One Answer

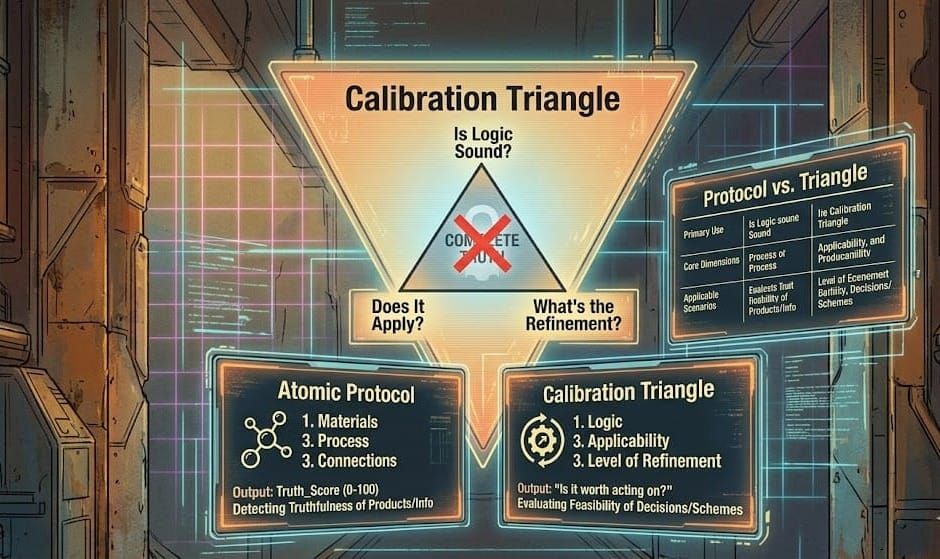

Through practice, we developed a simple framework called the Calibration Triangle.

It doesn’t ask “Is this true?”

Instead, it asks three more practical questions:

- Is the logical structure sound?

  Given the same premises, does the reasoning hold? If we change one premise, how does the conclusion shift? Are there internal contradictions?

- Can it be applied to the current problem?

  Does this model or suggestion help us make concrete decisions, design actionable steps, or predict possible outcomes? Or does it merely “sound reasonable”?

- What is its level of refinement?

  We don’t demand 100%. We only need a usable confidence interval.

  This interval comes from three sources: How many pieces of field evidence support it? How many rounds of calibration has it been through? How many independent nodes have cross-verified it?

  (For example: 1 piece of field evidence = 20% base score; 3 independent nodes of cross-verification = 60% confidence interval.)
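The worked example above can be turned into simple arithmetic. The sketch below is only an illustration under stated assumptions, not the series' official scoring method. It assumes each piece of field evidence and each independent cross-verifying node adds a flat 20 points, which is inferred from the two figures given. It also caps the score below 100, because the method never claims certainty. The function name `refinement_score`, the cap of 95, and the additive rule are all hypothetical. Rounds of calibration are left out because the article gives no weight for them.

```python
# Hypothetical sketch: turns the article's worked example into arithmetic.
# Assumption: each piece of field evidence and each independent
# cross-verification node contributes 20 points (inferred from
# "1 piece = 20%" and "3 nodes = 60%"). The cap is also an assumption.

POINTS_PER_SUPPORT = 20  # assumed weight per piece of support
MAX_CONFIDENCE = 95      # assumed cap: the method never claims 100%


def refinement_score(field_evidence: int, independent_nodes: int) -> int:
    """Return a rough refinement percentage from counts of support."""
    if field_evidence < 0 or independent_nodes < 0:
        raise ValueError("counts must be non-negative")
    raw = POINTS_PER_SUPPORT * (field_evidence + independent_nodes)
    return min(raw, MAX_CONFIDENCE)


print(refinement_score(field_evidence=1, independent_nodes=0))  # 20
print(refinement_score(field_evidence=0, independent_nodes=3))  # 60
```

A flat additive rule is the simplest reading of the example. A real scheme might weight independent nodes more heavily than a single piece of evidence, or give diminishing returns to each extra source.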

These three questions form a practical judgment framework to replace “absolute truth”.

A Real-World Case: An AI-Automated HR Interview System for a Hotel

A friend tried to sell a hotel an AI-automated HR interview formula, claiming it could use data to calibrate the match between candidates and positions.

The hotel owner declined. Why?

Using the Calibration Triangle:

- Logical Structure: The formula may be internally consistent, but it assumes “human emotional intelligence and service attitude can be accurately predicted by offline data” — a premise that has not been validated in this hotel’s actual context.

- Applicability: The hotel’s biggest current pain point is declining room bookings and guest acquisition, not difficulty hiring staff. The solution is disconnected from the immediate problem.

- Level of Refinement: No historical interview data from this hotel was used, and no performance calibration was done on existing employees. The formula’s refinement level is close to zero.

The hotel owner’s rejection was rational. It wasn’t that AI is useless — the proposal simply hadn’t been properly calibrated to reality.
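You can make the three questions concrete by recording each answer and acting only when all three are acceptable, which is the rule this article ends on. The Python sketch below does this and applies it to the hotel case. It is illustrative only. The class name `TriangleAssessment`, the 50% refinement threshold, and the decision to score the unvalidated premise as a failed logic check are assumptions. None of them come from the original framework.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical threshold: the article asks only for a "usable" level of
# refinement and does not fix a number, so 50 is an assumption.
MIN_REFINEMENT = 50


@dataclass
class TriangleAssessment:
    """Hypothetical record of one Calibration Triangle review."""

    logic_sound: bool  # Does the reasoning hold, with its premises validated in context?
    applicable: bool   # Does it help with the problem we actually have right now?
    refinement: int    # Rough level of refinement, as a percentage (0-100)

    def failed_checks(self) -> list[str]:
        """Name each corner of the triangle that did not pass."""
        failures = []
        if not self.logic_sound:
            failures.append("logical structure")
        if not self.applicable:
            failures.append("applicability")
        if self.refinement < MIN_REFINEMENT:
            failures.append("level of refinement")
        return failures

    def worth_acting_on(self) -> bool:
        """Act only when all three answers are acceptable."""
        return not self.failed_checks()


# The hotel case: key premise unvalidated, wrong pain point, no calibration data.
hotel_hr_formula = TriangleAssessment(logic_sound=False, applicable=False, refinement=0)

print(hotel_hr_formula.worth_acting_on())  # False
print(hotel_hr_formula.failed_checks())
# ['logical structure', 'applicability', 'level of refinement']
```

The sketch returns the list of failed checks as well as a yes/no answer. This lets it explain the owner's rejection one corner at a time.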

How the Calibration Triangle Relates to the Atomic Protocol

You might ask: How does this differ from the “Atomic Protocol” we discussed earlier?

| Aspect | Atomic Protocol | Calibration Triangle |
|---|---|---|
| Purpose | Detects the truthfulness of products or information | Evaluates the feasibility of decisions or schemes |
| Focus | Materials, process, and the connections between them | Logic, applicability, and level of refinement |
| Output | A Truth_Score (0–100) | A judgment of whether it is worth acting on |
| Applies to | Product verification, content checking, supply chain validation | Business decisions, scheme selection, risk assessment |

The two are complementary.

The Atomic Protocol tells you “how true this thing is.”

The Calibration Triangle tells you “whether this scheme is worth trying.”

Why This Matters Especially in the AGI Era

Future AGI will generate vast amounts of “seemingly true” content — fluent, confident, and structurally complete.

But it cannot answer three key questions for you:

Does this logic hold in my actual situation?

Can I execute this suggestion right now?

What is its level of confidence?

These questions require human calibration — your experience, your on-the-ground observation, your tolerance for risk.

The Calibration Triangle is an interface protocol for effective human–AGI collaboration:

You provide real context and value judgment.

AGI provides options and analysis.

Together, you move closer to “good enough” answers, rather than letting AGI decide alone.

Conclusion: Don’t Pursue the Absolute, Pursue What’s Good Enough

We live in an imperfect information world.

Waiting for “complete truth” before acting often means missing the moment.

The Calibration Triangle is not a compromise — it is a practical method.

It acknowledges our limitations, yet refuses to abandon the responsibility of judgment.

The next time you face a claim that cannot be fully verified, or a proposal without full certainty, pause and ask yourself three questions:

1. Is the logic sound?

2. Can it be applied to the current problem?

3. What is its level of refinement?

If all three answers are acceptable, that is often enough to act.

The rest can be calibrated along the way.

(Note: Behind this hotel case lies a deeper “standpoint” issue — why a scheme that seems perfect from an engineer’s perspective is often rejected in commercial reality. We will explore this further in Meta Series 05: Standpoint Evolution Theory.)

Upcoming

Meta Series 03: Atomic Protocol — How to Calculate Truth_Score

Meta Series 04: Synchronization Rate — Thresholds and Practice of Human-AI Collaboration

Meta Series 05: Standpoint Evolution Theory — No Right or Wrong, Only Generation and Resolution (In preparation)
