Case 25 | Ghost GDP and Government Counter-Cycles

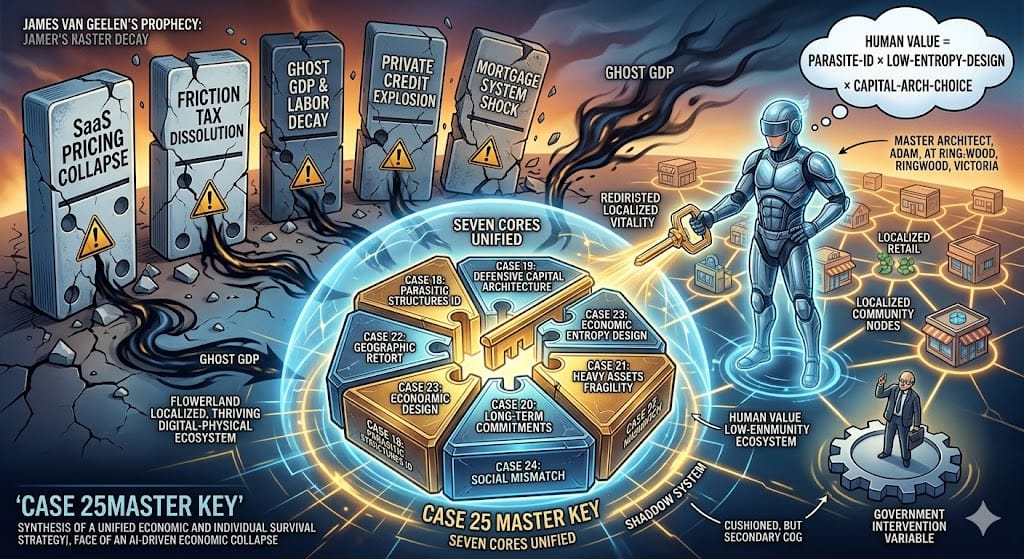

In early 2026, three seemingly independent events surfaced at around the same time, yet all pointed to the same core issue: the full-scale expansion of AI is reshaping society, the economy, and individual value. Former hedge fund operator James Van Geelen published a projection suggesting that if AI fully met optimistic expectations, productivity growth could jump to rates last seen in the 1950s, yet the global economy might still collapse. He broke the crisis down into five dominoes: the collapse of SaaS pricing power, the disappearance of friction rents, Ghost GDP, private credit defaults, and destabilization of the mortgage system. In this scenario, stock prices and corporate profits soar on the surface, while labor income declines, consumer purchasing power shrinks, and social structures are destabilized as AI displaces one role after another.

At the same time, a lecture by Naval Ravikant offered a perspective on how to respond. He argued that labor would be replaced by AI, but that the human moat lies in nonlinear judgment: the ability to make decisions and be accountable for the outcomes. True specialized knowledge comes from innate talent, curiosity, and deep immersion in a specific domain. Under the "individual company" model, a single person plus AI can match the output that once required fifty people. This is a defensive capital architecture: mastering judgment and core logic systems is the key to survival in the AI era. The third signal, an AI Agent case, offers evidence at the operational level. Automation is no longer mere conversation; it now involves goal setting, autonomous cross-platform execution, continuous calibration, and self-evolving systematic capabilities, which shows that judgment and architecture design can translate directly into action.

Mapping these three signals onto RealityCheck’s seven cases reveals the structural patterns of the AI era. Case 18 shows that value is being harvested from many fragments of daily life: SaaS pricing, credit card fees, insurance renewals, and similar mechanisms are “system taxes” that feed on human inertia and systemic complexity. When AI dismantles that complexity and eliminates friction, these parasitic structures collapse, which corresponds to James’ first and second dominoes. Case 23’s energy entropy perspective explains how capital and labor are spent on maintaining the system itself rather than on generating real consumption value; Ghost GDP is a concrete manifestation of this economic entropy. Case 19 translates Naval’s ideas into a capital architecture model: the individual company is a defensive structure that relies on judgment, specialized knowledge, and core logic systems to resist AI replacement. Cases 20–24 provide further real-world corroboration. They range from long-term payment obligations, the fragility of asset-heavy holdings, and geographic structure and retail gravity to mismatches in education, health, and social demand, and each layer makes James’ and Naval’s observations visible and measurable.

Integrating these cases reveals a clear path: systemic parasitic structures are dismantled by AI, energy and capital flow to computing power, labor income declines, and Ghost GDP spreads. Individuals who fail to reposition themselves risk structural obsolescence. Naval’s framework of judgment, specialized knowledge, and the individual company offers a direction for that transition: from passive labor to a node in the AI era, and from the system’s periphery to its core.

Yet individual capacity is limited, which underscores the importance of government intervention. Case 25 proposes that when AI leads to concentrated profits and stagnant consumption, governments can redirect energy back to humans through AI taxes, robot taxes, universal basic income, workforce retraining, and infrastructure investment. Successful intervention buffers the negative effects of Ghost GDP; failed intervention erodes the social contract, accelerates structural collapse, and raises both public safety and economic risks.

Bringing it together, we derive the optimized formula:

$$\text{Human Value} = \text{Ability}_{\text{Parasite-ID}} \times \text{Ability}_{\text{Low-Entropy-Design}} \times \text{Choice}_{\text{Capital-Arch}}$$

Recognizing parasitic structures lets us understand the logic behind the SaaS collapse and the disappearance of friction rents. Designing low-entropy systems directs energy back to humans. Choosing the right capital architecture helps ensure that individuals in the AI era are not eliminated but instead become nodes and system architects. James’ crisis projections, Naval’s directional guidance, and the AI Agent operational example offer real-world corroboration, while government intervention acts as an external regulator that keeps structural imbalances from spinning out of control.
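One way to read the formula is that its three factors multiply rather than add, so a weakness in any one of them drags down the whole. The sketch below illustrates only that property. The 0-to-1 scoring scale, the `Capabilities` class, and the `human_value` function are assumptions introduced for illustration; the formula itself does not specify units or a scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capabilities:
    """Hypothetical scores on an assumed 0.0-1.0 scale (not defined by the essay)."""
    parasite_id: float         # ability to recognize parasitic structures
    low_entropy_design: float  # ability to design low-entropy systems
    capital_arch: float        # quality of the capital-architecture choice

    def __post_init__(self):
        # Reject scores outside the assumed scale.
        for name, value in vars(self).items():
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be between 0 and 1, got {value}")


def human_value(c: Capabilities) -> float:
    """Multiply the three factors, mirroring the structure of the formula."""
    return c.parasite_id * c.low_entropy_design * c.capital_arch


if __name__ == "__main__":
    balanced = Capabilities(0.5, 0.5, 0.5)
    lopsided = Capabilities(1.0, 1.0, 0.0)
    print(human_value(balanced))  # 0.125
    print(human_value(lopsided))  # 0.0
```

Under these assumptions, a profile that is perfect on two factors but scores zero on the third ends up with no value at all, whereas a merely average profile on all three still scores above zero. That is the practical difference between a product and a sum.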

This is more than a framework for observing problems; it is an actionable, calibratable survival system for the AI era. It integrates insights from the cases, validation from external signals, and potential policy interventions into a complete survival toolkit that runs from crisis to direction and from countermeasures to architecture, providing clear operational guidance for humans in the era of Ghost GDP.
