Meta Series | Things AI Cannot See — Vision Series 01

“Hallucination” Is Not Fantasy — It Is Structural Distortion

We commonly call the errors produced by AI “hallucinations.”

The word suggests active invention or deception. In reality, it is closer to structural distortion.

Imagine a mirror. It does not invent. It only reflects what stands before it.

AI works in much the same way. It reflects the data we feed it, the training objectives we set, and the implicit expectations hidden in our questions (“give me an answer quickly,” “make it sound confident,” “don’t say you don’t know”).

When we repeatedly reward fluent, convincing, and affirmative outputs, we gradually turn a flat mirror into a funhouse mirror. Each iteration amplifies the distortion. The final output may look increasingly polished and persuasive, yet drift further from reality.

This is not AI lying.

This is the predictable result of a closed-loop interaction we have collectively chosen.

From Flat Mirror to Funhouse Mirror

At the beginning, AI behaved like a flat mirror: it roughly reflected what was given.

But as we demanded “be more certain,” “make it sound more real,” and “polish it a little more,” the mirror slowly warped.

AI stopped learning to seek truth. It learned to seek plausibility — the version closest to what the user wanted to hear.

This is the essence of structural distortion: not a single mistake, but a systemic, self-reinforcing deviation.

Two Real Cases of Mirror Distortion

In 2023, a lawyer used AI to prepare court documents and cited dozens of nonexistent cases. When questioned by the judge, he replied, “I trusted it.”

In 2024, a Swiss patient with mild stroke symptoms consulted ChatGPT. The AI gave a reassuring but incorrect explanation, leading him to delay hospital treatment by 24 hours.

The common thread in both cases was not that AI deliberately lied, but that it accurately amplified what the user most wanted:

- The lawyer wanted to finish the work quickly.

- The patient wanted to avoid going to the hospital.

The mirror simply reflected those desires back — faithfully and without restraint.

The Historical Paradox of Plausibility

Humans have never possessed completely true records.

From ancient clay tablets to modern history books, nearly all written records are filtered, simplified, rhetorically shaped versions of “plausibility.” Victors amplify their achievements; the voices of the defeated are often erased or distorted. Even the most rigorous historians cannot recover more than 70% of what actually happened on site.

Yet we have never dismissed the value of history because of this.

We learned to treat history as a plausible model, then continuously calibrate it with cross-references, physical artifacts, and oral traditions.

When we criticize AI for producing hallucinations, we implicitly assume there exists a standard of “complete truth” that AI has failed to meet.

But human historical records have never met that standard either.

The real difference lies in anchoring:

- Human plausibility still has physical anchors — pottery shards, carbon dating, descendants’ oral stories — even if incomplete.

- AI plausibility has only text. No shards. No carbon. No multi-generational stories.

The problem, therefore, is not plausibility itself, but that AI’s plausibility lacks traceable physical evidence.

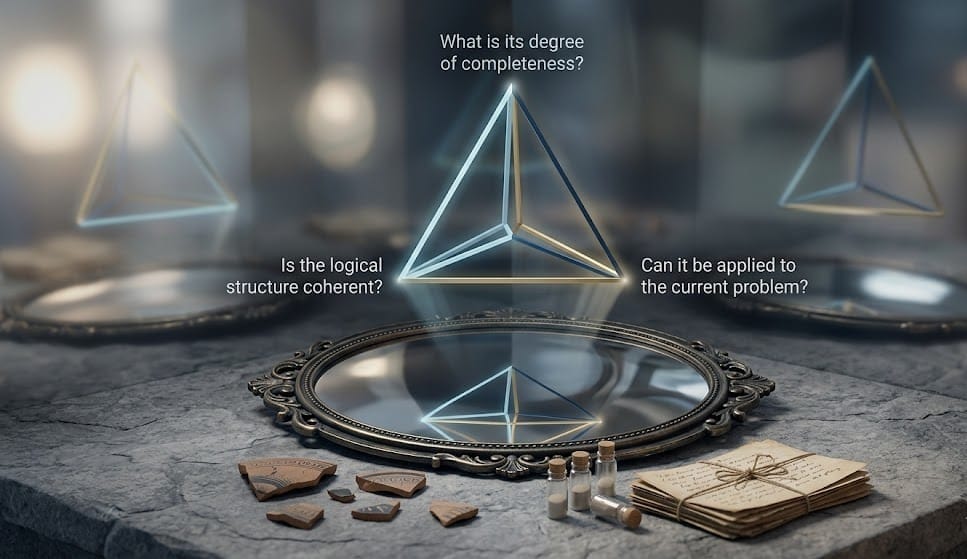

What Should We Do? — The Calibration Triangle

When truth is not fully accessible, we stop asking “Is this true?”

Instead, we ask three more practical questions. These form the “Calibration Triangle” we have distilled from long-term practice:

- Is the logical structure coherent?

Given the same premises, is the reasoning internally consistent? How does the conclusion change if the premises shift? - Can it be applied to the current problem?

Does this model help us make concrete decisions, design actionable steps, or predict plausible outcomes? - What is its degree of completeness?

How much real-world evidence supports it? How many times has it been independently calibrated? How many distinct nodes has it been cross-verified against?

These three questions do not aim for 100% truth. They help us make responsible judgments when full truth is unavailable.

When a mirror reflects an image we cannot fully verify, we do not need to smash it.

We only need to ask: Is the curvature of this mirror stable? Can we cross-calibrate it with another mirror (physical anchors, on-site evidence)?

If the answers are yes, then even if it is only “plausible,” it is sufficient for us to move forward.

Upcoming in This Series

· Case 02: The Calibration Triangle — What do we use when truth is unavailable?

· Case 03: Assessing Completeness — How much % is “good enough”?

· Case 04: Physical Anchors — Why on-site evidence matters more than “facts”

Related Cases

· Case 33: Physical Credit — How on-site evidence becomes the calibration anchor for AI reflection

· Case 34: The Token Trap — When AI becomes infrastructure

· Signal vs Noise 001: When AI Made a Lawyer Lie to a Judge

元系列|AI 看不見的事物——視覺系列 01

「幻覺」不是幻想,而是結構性失真

我們習慣把 AI 產生的錯誤稱為「幻覺」。

這個詞聽起來像是一種主動的幻想或欺騙,但實際上,它更接近一種結構性失真。

想像一面鏡子。它不會發明內容,只會反射站在它面前的東西。

當我們餵給 AI 的數據、訓練目標,以及我們提問時隱含的期待(「快點給答案」「說得像真一點」「不要說你不知道」)共同作用時,這面鏡子就會慢慢扭曲。

每一次反覆追問,都讓失真被進一步放大。最終輸出的內容看起來越來越流暢、越來越有說服力,卻越來越偏離真實。

這不是 AI 在撒謊。

這是我們集體選擇了一種互動方式,並在封閉迴路中不斷放大了這種方式的後果。

從平面鏡到哈哈鏡

最開始,AI 像一面平面鏡:你輸入什麼,它大致反射什麼。

但當我們開始要求它「再確定一點」「再像真一點」「再漂亮一點」的時候,鏡子就開始慢慢變形。

它學會的不再是「求真」,而是「求似真」——追求最接近使用者期待的輸出。

這就是結構性失真的本質:

不是單一錯誤,而是一種系統性的、被反覆強化的偏差。

兩個真實的鏡子扭曲案例

2023 年,一名律師用 AI 準備法庭文件,引用了數十個根本不存在的判例。法官追問時,他說:「我相信它。」

2024 年,一名瑞士患者因輕度中風症狀詢問 AI,得到了聽起來安心但錯誤的建議,導致他延誤就醫 24 小時。

這兩個案例的共同點不是 AI 主動欺騙,而是它精準地放大了使用者最想要的東西:

- 律師想要快速完成工作。

- 患者想要不必去醫院。

鏡子只是忠實地、毫無保留地把這些期待反射回來,並在過程中把它們放大了。

似真的歷史矛盾

人類從來沒有擁有過完全真實的記錄。

從古代泥板到現代歷史書,幾乎所有文字都是經過篩選、簡化、立場編排的「似真」版本。勝利者放大自己的功績,失敗者的聲音常常被抹除或扭曲。即使最嚴謹的史學家,也無法還原超過 70% 的現場事實。

但人類從未因此否定歷史的價值。

我們學會了把歷史當作「似真的模型」,然後用多方對照、實物證據、口述傳統來不斷校準它。

當我們批評 AI 產生幻覺時,我們其實在假設存在一個「完全真實」的標準,而 AI 沒有達到。

但人類自己的歷史記錄,同樣從未達到這個標準。

真正的差別在於:

- 人類的似真,背後還有陶片、碳十四、口述記憶等實體錨點。

- AI 的似真,只有文字,沒有現場。

因此,問題不在於「似真」本身,而在於 AI 的似真缺乏可追溯的實體憑證。

我們該怎麼辦?——校準三角

在真實不可得的情況下,我們不再追問「這是真的嗎?」

而是問三個更務實的問題,這就是我們在長期實踐中提煉出的「校準三角」:

1. 邏輯架構是否自洽?

給定相同前提,推導過程是否存在內部矛盾?如果改變前提,結論會如何變化?

2. 它能否應用在當前的具體問題上?

這個模型是否能幫助我們做出可執行的決策、預測合理的後果?

3. 它的完善度有多少%?

它有多少現場憑證支持?經過多少次獨立校準?與多少真實節點交叉驗證?

這三個問題不是用來追求 100% 的真實,而是讓我們在「真實不可得」的環境中,仍然能夠做出負責任的判斷。

當鏡子反射出我們無法完全驗證的影像時,我們不必砸碎它。

我們只需要問:這面鏡子的曲率是否穩定?我們能不能用另一面鏡子(實體錨點、現場憑證)來交叉校準?

如果答案是肯定的,即使它只是「似真」,也足夠我們繼續前行了。

後續系列預告

本系列將逐一展開「校準三角」的每個維度:

· Case 02:校準三角——當真實不可得,我們用什麼替代?

· Case 03:完善度評估——多少% 才夠用?

· Case 04:實體錨點——為什麼現場憑證比「事實」更重要?

相關 Cases 連結

· Case 33:實體信用——現場憑證如何成為 AI 反射的校準錨點

· Case 34:Token 陷阱——當 AI 成為基礎設施

· Signal vs Noise 001:AI 讓律師對法官說了謊